Email A/B Testing Explained - Examples, Ideas and Tips

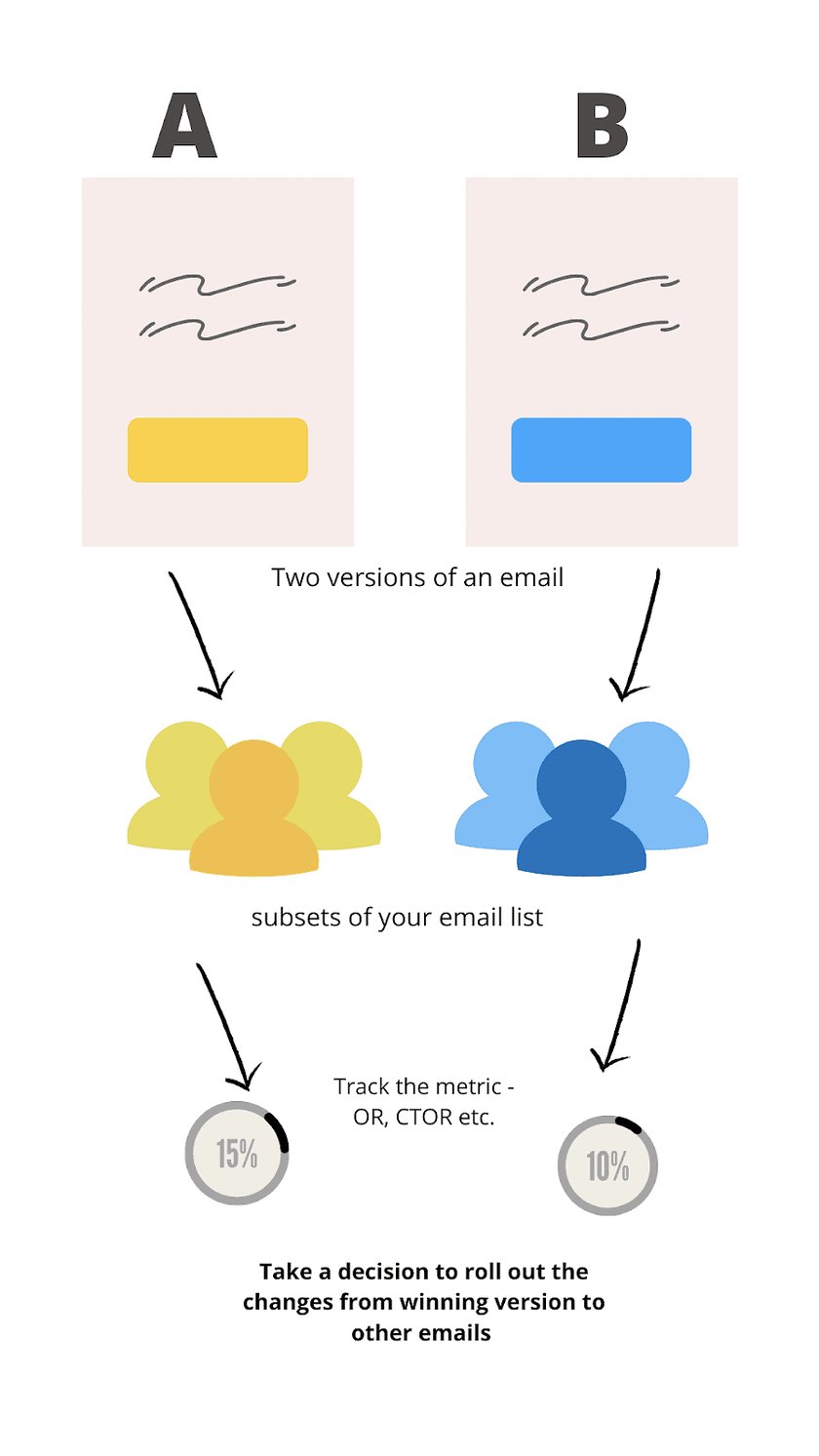

In email marketing, A/B testing is the process of sending one variation of your email to a subset of your subscribers and a different variation to another subset of subscribers and then looking at results to see which one performed better. The winning variation is then sent to all of the remaining subscribers on your list.
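The split described above can be sketched in a few lines of Python. This is only a minimal illustration, not how any particular email tool does it: the function names `split_for_ab_test` and `pick_winner`, the 20% test share, and the example addresses are all assumptions made for the example.

```python
import random


def split_for_ab_test(subscribers, test_percent=20, seed=None):
    """Randomly split a list into group A, group B, and the remaining subscribers.

    test_percent is the share of the list used for testing (hypothetical default),
    divided equally between the two variations.
    """
    rng = random.Random(seed)
    shuffled = list(subscribers)
    rng.shuffle(shuffled)
    # Each test group gets half of the test share; integer math avoids rounding surprises.
    group_size = len(shuffled) * test_percent // 200
    group_a = shuffled[:group_size]
    group_b = shuffled[group_size:2 * group_size]
    remainder = shuffled[2 * group_size:]
    return group_a, group_b, remainder


def pick_winner(rate_a, rate_b):
    """Return the label of the variation with the higher rate (ties go to A)."""
    return "A" if rate_a >= rate_b else "B"


subscribers = [f"user{i}@example.com" for i in range(1000)]
group_a, group_b, remainder = split_for_ab_test(subscribers, test_percent=20, seed=42)
print(len(group_a), len(group_b), len(remainder))
print(pick_winner(rate_a=0.21, rate_b=0.26))
```

Shuffling before slicing keeps the two test groups random, so a difference in results is less likely to come from who happened to be in each group.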

A/B testing can be applied to individual elements of an email, such as the subject line, preview text, or from name, or to the email as a whole, for example by changing the template or comparing long copy with short copy.

In this guide, we will look at what you should test and share tips for running an effective A/B test. These frameworks and tips can be used to increase the open rate, engagement, and overall ROI of your emails for any brand or industry.

Given the importance of A/B testing, if you need to send a high volume of emails, consider trying a VIP or deliverability service like the one offered by SendX. Contact us to know more.

One mantra we share with everyone who tries to improve their email marketing ROI just by looking at test data published by other brands or industries is: do your own test.

That's because the results may vary from one brand or industry to another. For example, short copy might get a better click-to-open rate in eCommerce but might perform poorly with infomercial products. That's why instead of relying on generic research data, test with your audience and product to draw the correct conclusions.

Let's look at the 2 most important tips to follow before you start A/B testing.

2 Things To Note Before A/B Testing

Have a clear hypothesis

A hypothesis is an explanation made on the basis of limited evidence as a starting point for further investigation.

It basically follows the structure: “If I change this, it will have this effect.” It should be aimed at getting some insights.

For example, say you saw a CTA that was very effective on you, and you now want to A/B test it in your emails.

A bad hypothesis or no hypothesis statement is - 'Change the CTA copy because it works better'.

A good hypothesis would be 'Changing the CTA from Enroll Now to Learn More will reduce confusion and will increase email conversions.'

So, formulate your statement like this:

- Changing _______(element in your email) into ______(new idea you have) will ________ (end effect you want), because ________(your assumption).

Test one thing at a time

Test one thing at a time so that it is clear what affected the results. If you test multiple things at once, for example changing the CTA placement and also switching the copy from long to short, you won't be sure which change increased or decreased your conversions. It’s important to choose a data management tool that can accommodate changes from your A/B test results.

For example, if you are comparing two different subject lines, you should keep the send time, from name, and preview text the same.
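One way to enforce the "one thing at a time" rule is to compare the two variations field by field before sending. The sketch below assumes each variation is described as a simple dictionary; the field names and the helper names `changed_fields` and `is_single_variable_test` are hypothetical and not tied to any email platform.

```python
def changed_fields(variant_a, variant_b):
    """Return the sorted list of fields whose values differ between the two variations."""
    keys = sorted(set(variant_a) | set(variant_b))
    return [key for key in keys if variant_a.get(key) != variant_b.get(key)]


def is_single_variable_test(variant_a, variant_b):
    """True only when exactly one field differs, so the result can be attributed to it."""
    return len(changed_fields(variant_a, variant_b)) == 1


# Hypothetical variations: only the subject line differs.
variant_a = {
    "subject": "Your weekly digest is here",
    "from_name": "Ana from Example Co",
    "preview_text": "Five reads for your week",
    "send_time": "09:00",
}
variant_b = dict(variant_a, subject="5 reads you should not miss")

# A third variation that changes two things at once.
variant_c = dict(variant_a, subject="5 reads you should not miss", send_time="18:00")

print(changed_fields(variant_a, variant_b))
print(is_single_variable_test(variant_a, variant_b))
print(is_single_variable_test(variant_a, variant_c))
```

If the check fails, the list returned by `changed_fields` shows which extra element needs to be reset before the test goes out.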

Now let's look at some of the ideas to help you get started with A/B testing.

What To A/B Test

From name

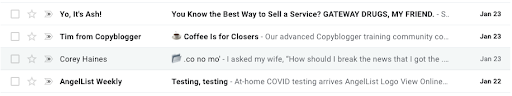

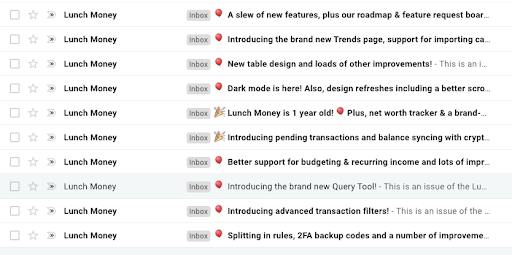

If you open your inbox and just observe the 'from name', you can find many variations.

Here is a screenshot from my inbox

Source: deliveroo email

We have an informal 'from name' (Yo, it's Ash), a person's name along with the company name (Tim from Copyblogger), just a person's name (Corey Haines) because they have a strong personal brand, and just the brand name (Deliveroo).

Personally, I think that people are naturally more likely to open an email when it has an actual person’s name in it vs the name of a business.

But don't assume anything unless you test. For example, I recently observed that Lunch Money went from using Jen From Lunch Money to just Lunch Money. I am assuming the company name worked better for them.

Metrics to measure the result: Open Rate

Subject line

Try personalizing your subject line by including the subscriber's name. It won't work if you overdo it and use it every time. Test it on emails that require immediate action, or when you are launching something new and need maximum eyeballs.

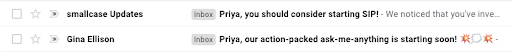

The use of emojis in the subject line has shown higher open rates but when every other brand starts doing that, the inbox feels cluttered and the novelty of an emoji wears off.

The trick to using emojis correctly is:

- Use them sparingly, and don't pack in so many emojis that the subject line feels spammy

- Own an emoji and use the same one all the time (see how Google Maps uses the earth emoji every time or Morning Brew uses the coffee emoji)

- Stick to a theme (Calm mostly uses a heart emoji and just varies the colors)

- Instead of placing the emoji at the front of the subject line, try placing it at the end (see ClassPass email)

You can also test short vs long subject lines. For example, Morning Brew uses 2-3 word subject lines, while Lunch Money uses longer ones.

Metrics to measure the result: Open Rate

Long copy vs Short copy

A lot of marketers who aren’t copywriters are certain that short copy will win over long copy every time.

The problem is that this logic isn’t irrefutable, and there are case studies to prove it. Copyhackers’ case study about 3.5xing Wistia’s paid conversions from email is a perfect example. When Joanna first showed the test version to Wistia, they immediately objected with “that copy is longer than we’re used to”.

And look what happened.

Sometimes people don’t act because they aren’t adequately persuaded. That calls for longer, more detailed copy.

The question is what should you include in that extra copy? Yep, that’s what you’ll be testing.

Common examples of additional copy include:

- Customer testimonials

- A longer list of all the features or benefits associated with your product or service

- Stories that explain, in detail, how your product or service helped people overcome a problem or achieve their goal

- Stats and data that reinforce the need for your product or service

- Frequently asked questions (with answers)

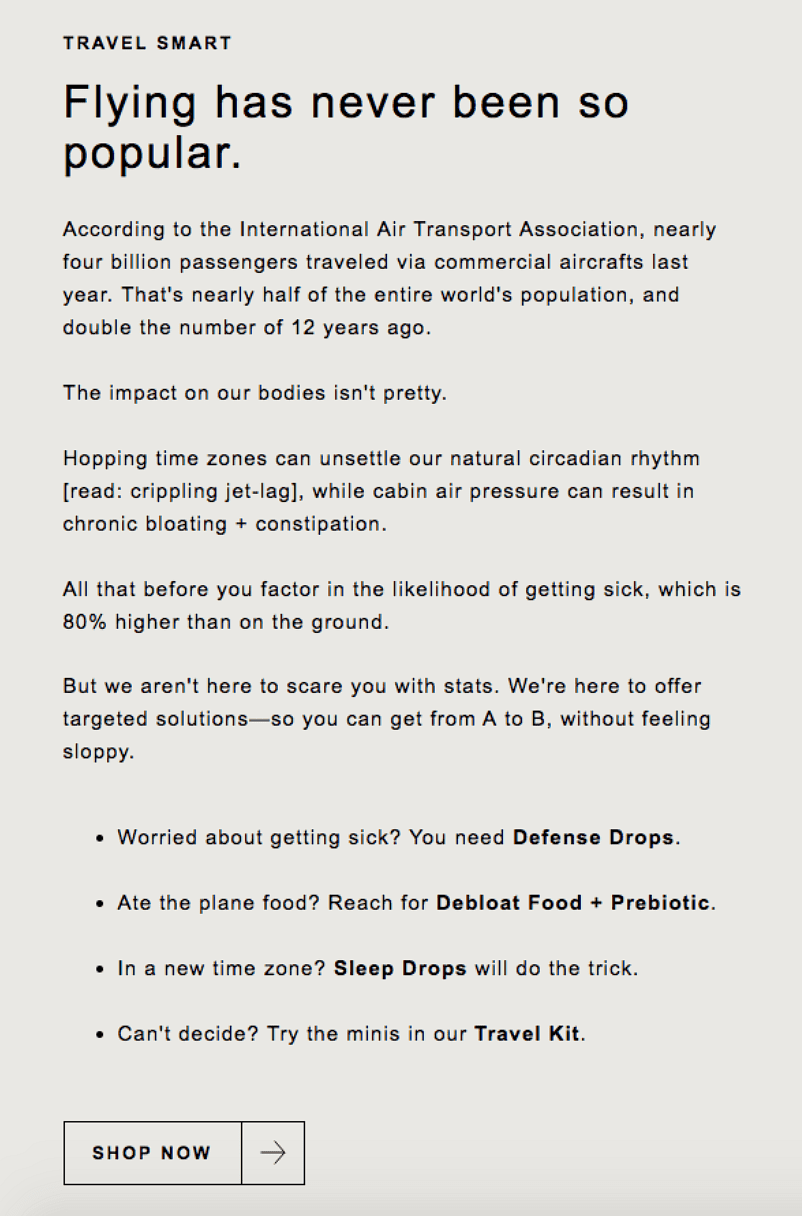

Here’s an example from The Nue Co. It's an eCommerce brand, an industry that’s prone to short copy. Check out what this company does instead:

Rather than just having a well-designed image and a brief description of the product, The Nue Co. adds some extra copy to clarify the problem their products are solving.

If you’re not sure what to add to your extra copy, do a quick survey at the end of the nurture campaign you want to optimize. Make it one question, and ask “What’s stopping you from doing x?” (In this context, “x” is whatever action you want people to take.)

Use the answers from that survey to uncover what’s holding people up, and then test some additional copy and see if your results improve.

Metric to measure the result: Click-through-Rate

CTA

The message, images, and tone of your email definitely influence people to read it, but when it comes to eliciting action from readers, it's the 'call to action' that drives the response.

A CTA also has multiple variables to test. So pick one or a combination of two to optimize it for maximum clicks.

Here are the variables you can test with CTAs in your emails:

1) Button vs text: A button might make your email feel less conversational, but it does make it absolutely clear to the readers about where to click.

The benefit of a text-based CTA is that you can embed it in your copy without breaking up the conversation. It's worth testing which one works best for the kind of emails you send.

2) Color: Colors can shape perception and evoke emotions. For example, red & purple show enthusiasm, green is for calmness, yellow & orange for enthusiasm, and gold for a feeling of luxury. Narrow down on the emotions you want to convey in your emails and test different colors in your CTA.

If you have an established color palette, generate some variations from it. And remember to keep the CTA in contrast with the rest of the text and images so it doesn't blend in.

You can test the color of both button-CTAs & text hyperlinks.

3) Copy

Changing your CTA from 'Shop here' to 'Buy Now' will not make much of a difference. The two phrases are worded differently but say essentially the same thing. Here are two questions you can ask before formulating a hypothesis to test the text on your CTAs:

- What's the readers' motivation for clicking this button?

- What's the destination where the reader will go after they click this button?

Then create two copies to test out. For example, your copy can be either very direct -- 'Click here to watch the video' or show some benefits such as 'Watch this to level up your marketing.'

Here's an example of two different CTAs tested by Airbnb:

This is the CTA they sent when online experiences were just opening up:

Here's an email I received after a few weeks talking about online experiences but had a different CTA this time:

4) Placement

The placement of your CTA is as important as what it says. If the CTA is hidden between your images or not placed where people expect it to be (at the end of the email, or below the products you are selling), then it might not lead to a good click-to-open rate.

Test whether sprinkling it in between your text improves conversions. Find out if putting the CTA above the fold in your email (so the reader doesn't have to scroll to the middle or bottom) is worth the effort and if it improves conversion.

Metric to measure the result: Click-through-Rate

5) Image vs No Image

The first level of image tests can be used to check whether images increase or decrease your click-to-open rate. Then, if you discover that images help improve conversion, you can test the kind of images used and their placement. Naturally, your images must be high-quality, so always edit them (e.g., background removal) with the best photo editing software you can find.

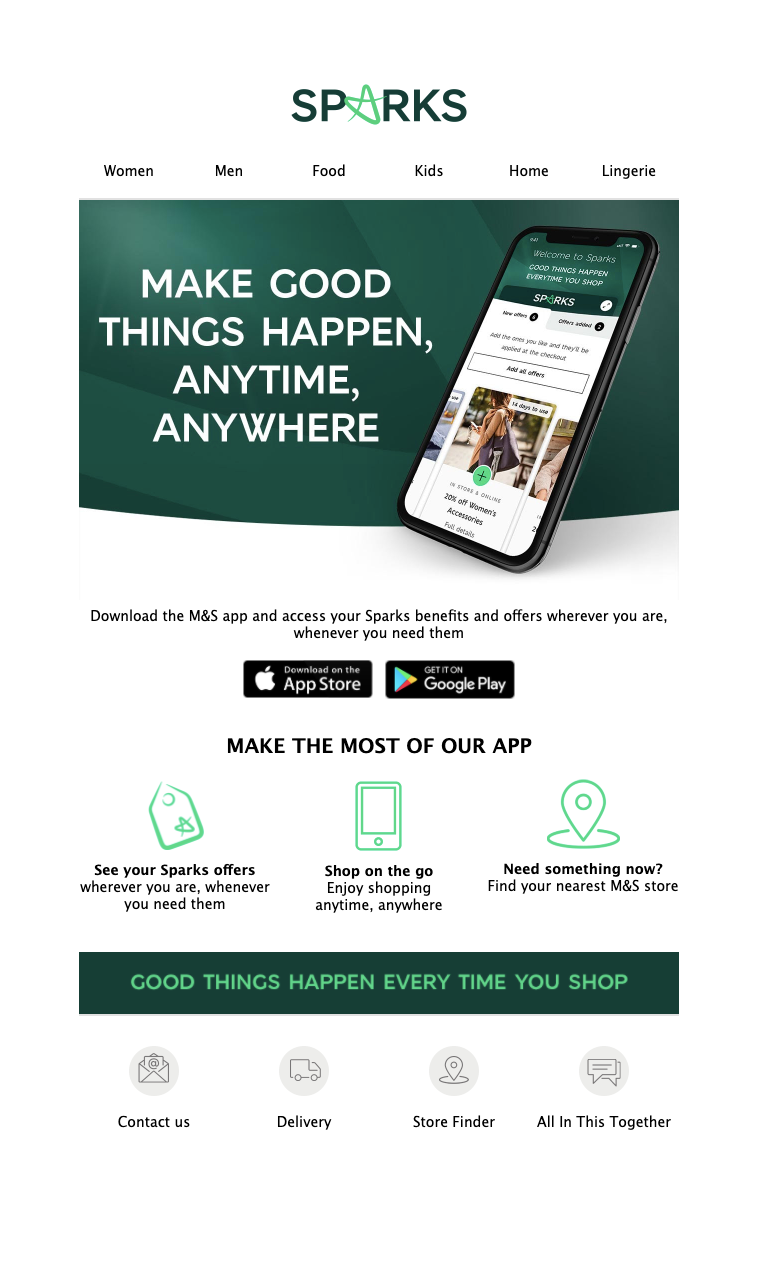

Here is an example from M&S where one email has an image that pushes the download app buttons below the fold. Then there is another email without an image that shows the download app buttons above the fold.

This can be tested to see which one gets you more clicks on the download app buttons.

6) The layout of your message

The layout of your email can be a single column structure which would increase the length of your email but show each section in its own row. Or it can be a 2-column structure where you split each row into two to show maximum content or products in limited space.

If you want to highlight just a single product, try a layout that highlights your product while having minimal space for copy.

The layout that will work for you will depend on the kind of industry and the amount of content you share in each email. Test by keeping your content, subject line, etc., the same but changing only the layout.

7) Time to send your email

There is plenty of data from studies showing what time of the day or day of the week works best for sending emails. But instead of relying on generic data from industries that might be very different from yours, do your own tests to see what works best.

Try analyzing the time of day when your emails are most opened. Then come up with 2-3 options and test them against your standard time. There will never be a perfect answer, but you can optimize based on the context of your emails and the behavior of your audience.

For example, for a B2B brand, Mondays or Tuesdays might be the best days to send emails, but if you are a travel agency then weekends might work better.
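To find the hours when your emails are most opened, you can count open events per hour and take the top few as send-time candidates. The sketch below assumes you can export open timestamps as ISO 8601 strings; the sample data and the function names `opens_by_hour` and `top_send_hours` are made up for illustration.

```python
from collections import Counter
from datetime import datetime


def opens_by_hour(open_timestamps):
    """Count how many opens happened in each hour of the day (0-23)."""
    return Counter(datetime.fromisoformat(ts).hour for ts in open_timestamps)


def top_send_hours(open_timestamps, n=3):
    """Return the n hours with the most opens, busiest first."""
    return [hour for hour, _ in opens_by_hour(open_timestamps).most_common(n)]


# Hypothetical export of open events.
open_timestamps = [
    "2024-03-04T09:15:00",
    "2024-03-04T09:40:00",
    "2024-03-04T13:05:00",
    "2024-03-05T09:02:00",
    "2024-03-05T13:30:00",
    "2024-03-05T20:45:00",
]

counts = opens_by_hour(open_timestamps)
candidates = top_send_hours(open_timestamps, n=2)
print(counts[9], counts[13], counts[20])
print(candidates)
```

The hours returned here are only candidates. Each one still needs to be tested against your standard send time, since opens also depend on when past emails were sent.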

Metric to measure the result: Click-through-Rate

A/B Testing Is a Process

A/B testing is a constant process. It should not be avoided because you have a small list, or stopped because you are now a big brand. People's online behavior keeps evolving, and to keep up with it, you need to constantly keep track of what's working and what's not.

In fact, a large email list benefits from A/B testing equally or more.

So, test early and test often for best results.

If you are looking for an email marketing software that can help you set up your tests without any technical difficulty or confusion, give SendX a try. You can sign up using your email ID and get a 14-day free trial that will give you access to all features (testing, segmentation, email creation etc.).

FAQs

1) What is email A/B testing?

Email A/B testing, or split testing in email marketing, is the process of sending one version (version A) of your email to a subset of your broadcast list and sending another version (version B) to another subset.

2) Why should I go for A/B testing?

Through A/B testing, you can increase the open rate, engagement, and overall ROI of your emails for any brand and industry. The behavior of the audience changes with changes in technology, markets, and the maturity of your product. So ‘Always Be Testing’.

3) What elements should be tested in A/B testing?

The most impactful elements that should be tested in A/B testing are:

- Subject line, measured by the open rate.

- CTA, measured by click-through rate.

- Send time, measured by open rate.

4) What metric should I track while running A/B tests?

While running A/B tests, choose a metric that will define the success of the test. For example, if you are testing two subject lines, make sure that you can track your open rates. If you are testing the effect of long-form vs short-form copy, have a CTA in your email so that you know which one works better through the click-to-open rate.
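The metrics mentioned in this guide can be computed from three counts. The sketch below uses commonly cited definitions (opens and clicks divided by delivered emails, and clicks divided by opens for click-to-open rate). Email tools differ on details such as using sent vs delivered emails as the denominator, so treat the formulas, the function names, and the sample numbers as assumptions.

```python
def rate(numerator, denominator):
    """Safe division that returns 0.0 when there is nothing to divide by."""
    return numerator / denominator if denominator else 0.0


def email_metrics(delivered, unique_opens, unique_clicks):
    """Compute open rate, click-through rate, and click-to-open rate as fractions."""
    return {
        "open_rate": rate(unique_opens, delivered),
        "click_through_rate": rate(unique_clicks, delivered),
        "click_to_open_rate": rate(unique_clicks, unique_opens),
    }


# Hypothetical results for one variation.
metrics = email_metrics(delivered=1000, unique_opens=250, unique_clicks=50)
for name, value in metrics.items():
    print(f"{name}: {value:.1%}")
```

Running the same function on both variations gives you like-for-like numbers to compare on the one metric you chose before the test.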