As email marketers, we constantly juggle design aesthetics, captivating copy, and that ever-elusive perfect subject line. But how do we truly determine which element elicits the best response from our audience?

That is where A/B testing comes into play.

A/B testing involves sending out two versions of an email and measuring which one receives a better response. It is a straightforward yet powerful way to fine-tune email campaigns based on real feedback.

In this guide, we will explore what A/B testing is and how you can use it to improve your email results. Whether you are just starting out or refining your strategies, this article provides the tools you need to make your campaigns more effective.


What is A/B Testing?

Imagine you have an online shoe store and you want to send out an email about a weekend sale.

Version A (Email A):

Subject Line: “Huge Weekend Sale on All Shoes!”

Version B (Email B):

Subject Line: “Get 50% Off All Shoes This Weekend!”

You send Email A to 100 people and Email B to another 100 people. After a day, you see that 70 people opened Email A and 50 people clicked a link inside. For Email B, 80 people opened it and 60 people clicked a link.

Email B came out ahead on both measures: more opens (80 vs. 70) and more clicks (60 vs. 50). Therefore, you choose to send Email B to the remaining subscribers, since it drove more engagement.

That is A/B testing.

A/B testing in email marketing compares two versions of an email to determine which performs better for specific metrics, such as open rates or click-through rates. Each version is sent to a subset of subscribers, and the results are analyzed to guide future campaigns.
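Raw counts can mislead when test groups are small. As a minimal sketch (not a feature of any particular email platform), the Python snippet below runs a standard two-proportion z-test on the shoe-store numbers. The helper name `two_proportion_z_test` is our own, and it assumes both rates are measured against the 100 recipients of each version.

```python
import math

def two_proportion_z_test(successes_a, n_a, successes_b, n_b):
    """Return (z, two-sided p-value) for the null hypothesis that
    versions A and B share the same underlying rate."""
    p_a = successes_a / n_a
    p_b = successes_b / n_b
    # Pooled rate, assuming A and B perform the same (the null hypothesis)
    pooled = (successes_a + successes_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (p_b - p_a) / se
    # Two-sided p-value from the standard normal distribution via erfc
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value

# Shoe-store example: 100 recipients per version
for metric, a, b in [("opens", 70, 80), ("clicks", 50, 60)]:
    z, p = two_proportion_z_test(a, 100, b, 100)
    print(f"{metric}: A={a}/100, B={b}/100, z={z:.2f}, p={p:.3f}")
```

With only 100 recipients per version, neither the open gap nor the click gap clears the common p < 0.05 threshold, which is a signal to test on larger groups before declaring a definitive winner.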

It helps you figure out what your email subscribers prefer, so you can send them content they'll engage with.

Deciding What to Test for A/B Testing in Email Marketing

A/B testing is essential for optimizing email campaigns. Here is a systematic approach to deciding what to test:

1. Understand Your Metrics:

Identify which metrics matter most. Are you focused on improving open rates, click-through rates, or conversions? This clarity helps prioritize testing efforts.

2. Review Past Campaigns:

Analyze previous results to identify underperforming areas or patterns that suggest improvement opportunities.

3. Focus on High-Impact Elements:

Test the elements most visible to recipients:

• Subject lines

• Call-to-action (CTA) buttons or text

• Images and graphics

• Email body content

Example: Morning Brew tests multiple subject lines daily to determine reader preference.

4. Listen to Your Audience:

Gather feedback directly from subscribers to identify unclear or unappealing content.

5. Form a Hypothesis:

Create a predictive statement linking cause and expected effect.

Formula: “If [specific change], then [expected outcome], because [rationale].”

Example: “If I include the recipient’s first name in the subject line, then open rates will increase, because personalized emails feel more relevant.”

6. Ensure Variations Are Distinct:

Make sure each version differs clearly so you can isolate the variable’s impact.

7. Limit Variables:

Test one element at a time to maintain accuracy.

8. Remember Context:

Account for external factors, such as holidays or industry events, which may affect engagement results.

6 Best Practices for Running A/B Tests in Email Marketing

1. Segment Your Audience Carefully:

Different segments behave differently. By grouping subscribers based on demographics or preferences, your tests yield more accurate, actionable insights.

If you’re a global brand, some regions may prefer a more direct marketing approach, while others might appreciate a softer touch. Testing an email's content or design in North America might yield different results than in Asia due to cultural nuances. By segmenting, you can pinpoint what works best for each specific audience.

2. Matched Groups:

Ensure test groups are identical except for the variable. Use random sampling to maintain demographic balance, preventing skewed results.

Stratified random sampling is especially useful here. If you know that 30% of your audience is aged 18-25 and 70% is 26 or older, randomly assign subscribers within each age band so that both test groups keep the same 30/70 ratio. This ensures that any observed difference is likely due to the variable you're testing, not underlying demographic differences.
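Here is a minimal Python sketch of that stratified split. The subscriber records, the `age_band` field, and the `stratified_split` helper are hypothetical; many email platforms perform the split for you.

```python
import random
from collections import defaultdict

def stratified_split(subscribers, stratum_of, seed=42):
    """Split subscribers into groups A and B, dividing each stratum
    (e.g. age band) in half so both groups share the same audience mix."""
    rng = random.Random(seed)  # fixed seed makes the split reproducible
    by_stratum = defaultdict(list)
    for sub in subscribers:
        by_stratum[stratum_of(sub)].append(sub)
    group_a, group_b = [], []
    for members in by_stratum.values():
        rng.shuffle(members)  # random assignment within each stratum
        half = len(members) // 2
        group_a.extend(members[:half])
        group_b.extend(members[half:])  # B gets the extra member if the count is odd
    return group_a, group_b

# Hypothetical list: 30% aged 18-25, 70% aged 26+
subscribers = [
    {"email": f"user{i}@example.com", "age_band": "18-25" if i < 300 else "26+"}
    for i in range(1000)
]
group_a, group_b = stratified_split(subscribers, lambda s: s["age_band"])

for name, group in [("A", group_a), ("B", group_b)]:
    share = sum(s["age_band"] == "18-25" for s in group) / len(group)
    print(f"Group {name}: {len(group)} subscribers, {share:.0%} aged 18-25")
```

Shuffling within each stratum, rather than shuffling the whole list once, guarantees the 30/70 ratio in both groups instead of only approximating it.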

3. Advanced Scheduling:

Send emails when your audience is most active. Analyze past campaigns to identify peak engagement times and schedule accordingly.

For example, if one segment tends to open emails in the morning and another in the afternoon, schedule each segment's send for its peak window. Within a segment, send both versions at the same time so that timing doesn't skew the comparison. This maximizes the chances of your email being seen and acted upon.
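A minimal Python sketch of finding each segment's peak open hour from past campaign data. The segment names and timestamps are made-up sample data, and the sketch assumes the timestamps are already in each subscriber's local time.

```python
from collections import Counter
from datetime import datetime

def peak_open_hour(open_timestamps):
    """Return the hour of day (0-23) with the most opens, or None if there are none."""
    if not open_timestamps:
        return None
    hour_counts = Counter(ts.hour for ts in open_timestamps)
    hour, _count = hour_counts.most_common(1)[0]
    return hour

# Made-up open timestamps from past campaigns, grouped by segment
opens_by_segment = {
    "early_birds": [datetime(2024, 3, 4, 8, 15), datetime(2024, 3, 5, 8, 40),
                    datetime(2024, 3, 6, 9, 5)],
    "afternoon_readers": [datetime(2024, 3, 4, 14, 10), datetime(2024, 3, 5, 15, 30),
                          datetime(2024, 3, 6, 14, 55)],
}

for segment, stamps in opens_by_segment.items():
    # Both test versions for this segment go out together at its peak hour
    print(f"{segment}: send both versions around {peak_open_hour(stamps)}:00")
```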

4. Multivariate Testing for the Experienced:

While A/B testing isolates one change, multivariate testing examines multiple elements at once to show how they work in combination.

Suppose you want to test a new subject line and a new call-to-action button simultaneously. Multivariate testing sends every combination (here, 2 subject lines × 2 buttons = 4 versions) to separate, randomly assigned groups. Because each combination needs its own group, you'll need a considerably larger audience to draw reliable conclusions.
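To get a feel for "considerably larger," here is a rough Python sketch using the standard sample-size approximation for comparing two proportions (two-sided test, 5% significance, 80% power by default). The 20% baseline and 22% target open rates and the CTA labels are illustrative assumptions, and treating every combination as its own full-size group is a conservative simplification; dedicated multivariate tools may analyze the design more efficiently.

```python
import math
from itertools import product
from statistics import NormalDist

def sample_size_per_variant(p_baseline, p_expected, alpha=0.05, power=0.8):
    """Approximate recipients needed in EACH group to detect a change from
    p_baseline to p_expected with a two-sided two-proportion z-test."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # about 1.96 for alpha = 0.05
    z_beta = NormalDist().inv_cdf(power)           # about 0.84 for 80% power
    p_bar = (p_baseline + p_expected) / 2
    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_baseline * (1 - p_baseline)
                             + p_expected * (1 - p_expected))
    ) ** 2
    return math.ceil(numerator / (p_baseline - p_expected) ** 2)

# Hypothetical goal: lift the open rate from 20% to 22%
n = sample_size_per_variant(0.20, 0.22)

subject_lines = ["Huge Weekend Sale on All Shoes!", "Get 50% Off All Shoes This Weekend!"]
cta_labels = ["Shop Now", "See the Deals"]  # hypothetical button text
combinations = list(product(subject_lines, cta_labels))  # 2 x 2 = 4 versions

print(f"A/B test (2 versions): about {2 * n} recipients")
print(f"Multivariate test ({len(combinations)} versions): about {len(combinations) * n} recipients")
```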

5. Minimize External Influences:

Avoid testing during major holidays or global events when subscriber behavior may be atypical.

If there’s a major holiday coming up, be aware that email engagement might be atypical during this period. People might be on vacation or busy with festivities, affecting open and click rates. Postpone your test or factor in these anomalies when analyzing results.

6. Duration & Consistency:

Run each test long enough to capture a full cycle of subscriber behavior, and keep everything except the tested variable consistent between versions for the entire test.

For example, if you start an A/B test on a Monday and end it on a Wednesday, you might miss out on how subscribers engage with emails over the weekend. Aim for a full business cycle to ensure you're capturing a representative sample of user behavior. However, avoid stretching it too long, like over a month, as other external factors might come into play.

Future Trends in A/B Testing for Email Marketing

A/B testing, a cornerstone of digital marketing optimization, has evolved over the years and is set to experience even more advancements in the near future. Here's a glimpse into what lies ahead:

Real-time Optimization: Instead of waiting for the conclusion of A/B tests to decide on a winner, technologies are emerging that shift traffic in real-time to the better-performing variant, ensuring optimal user experience and conversion throughout the test.

Personalization at Scale: As businesses collect more data on their users, the future will focus on hyper-personalized experiences. Rather than just A/B testing, brands will create multiple variants tailored to different audience segments.

Integrated Testing Platforms: Tools will offer comprehensive solutions that merge A/B testing with other functionalities like heat mapping, session recordings, and user surveys, providing a holistic view of user behavior.

Predictive Analytics: Instead of solely relying on past data, businesses will use predictive analytics to forecast how certain changes can impact future user behavior, guiding the A/B testing process.

Predictive Personalization: With AI's capability to analyze vast amounts of data, it can predict the preferences of individual users, allowing for real-time customization of emails even before the user interacts with them.

Adaptive Learning: As opposed to traditional A/B testing where one variant is declared a winner at the end, machine learning algorithms can constantly adapt and tweak email parameters based on ongoing user interactions, ensuring continual optimization.
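Real-time optimization and adaptive learning are commonly built on multi-armed bandit algorithms. As one illustration (the trends above don't name a specific algorithm), here is a minimal Python simulation of Thompson sampling: each send goes to whichever version wins a random draw from its estimated click-rate distribution, so traffic drifts toward the better performer as evidence accumulates. The click rates are invented purely for the simulation.

```python
import random

def thompson_pick(stats, rng):
    """Pick a version by sampling its click rate from a Beta posterior.
    stats maps version name -> [clicks, sends]."""
    draws = {
        name: rng.betavariate(1 + clicks, 1 + sends - clicks)
        for name, (clicks, sends) in stats.items()
    }
    return max(draws, key=draws.get)

def simulate(true_rates, rounds=10000, seed=0):
    """Simulate sending `rounds` emails, choosing the version adaptively."""
    rng = random.Random(seed)
    stats = {name: [0, 0] for name in true_rates}
    for _ in range(rounds):
        name = thompson_pick(stats, rng)
        clicked = rng.random() < true_rates[name]  # simulated recipient response
        stats[name][0] += int(clicked)
        stats[name][1] += 1
    return stats

# Invented click rates: version B is genuinely better
print(simulate({"A": 0.05, "B": 0.10}))  # {version: [clicks, sends]}
```

Unlike a fixed 50/50 split, the bandit keeps sending some email to the weaker version while it is still uncertain, then shifts most of the volume to the stronger one.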

Final Thoughts

Effective email marketing relies on data-driven insights, not guesswork. A/B testing provides actionable data that helps marketers understand subscriber preferences and refine campaigns accordingly. As we discussed, implementing A/B testing can enhance your campaign's results. If you're looking for a tool to help streamline this process, consider using SendX.

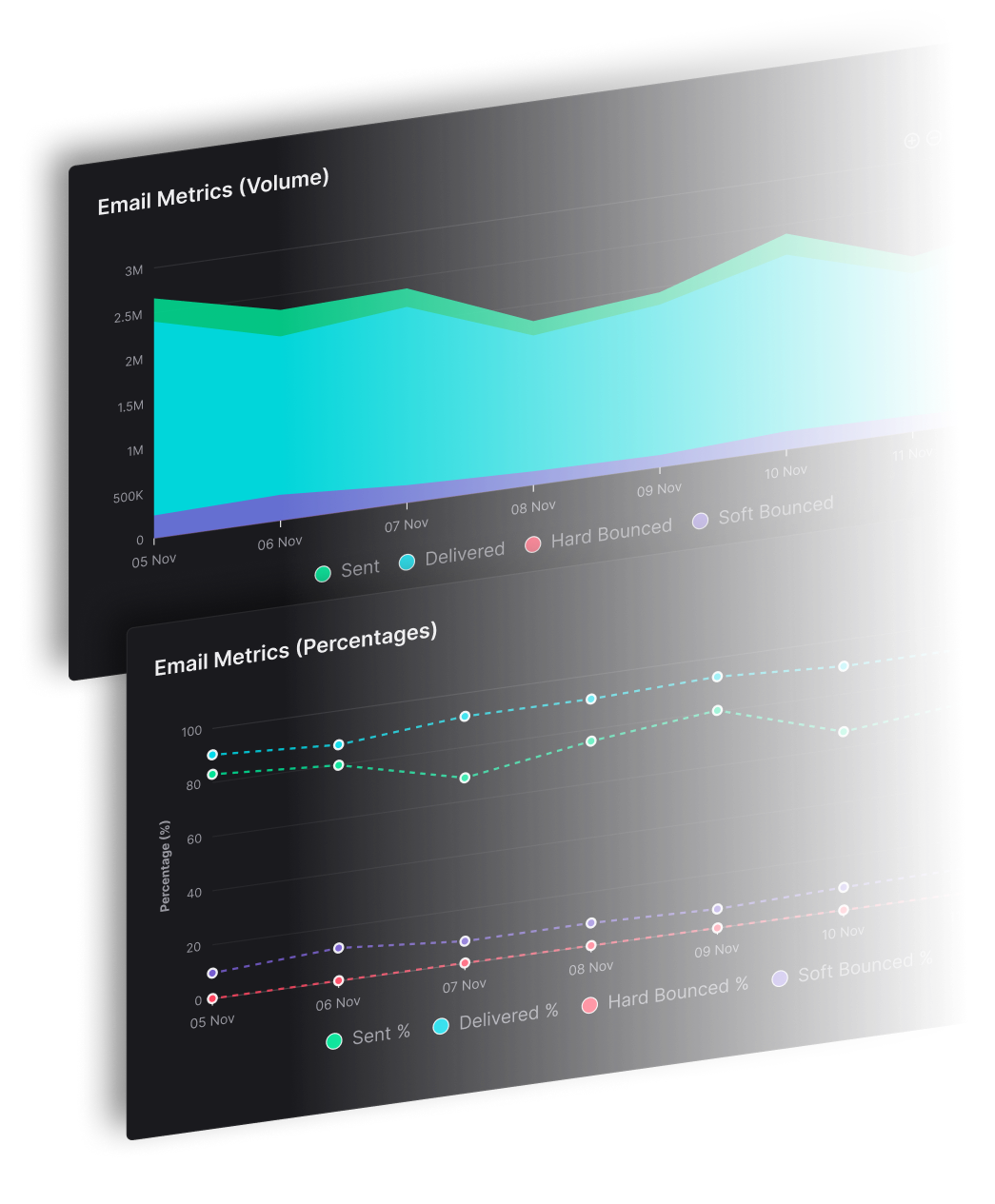

SendX is an email marketing platform for companies that send large numbers of emails and want high deliverability. It's not just another platform; it's your email marketing Swiss Army knife. Whether you're A/B testing, segmenting your audience, or crafting the perfect automated sequence, SendX has got your back. Take a free trial here & test for yourself.